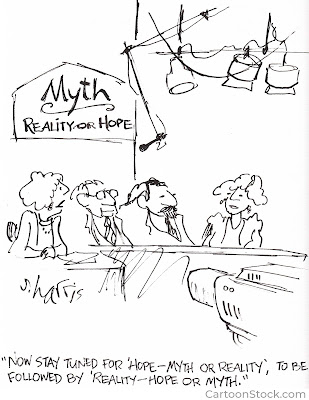

In my discussion of Steven Pinker’s book, Rationality, I referred to his observation that people tend to have a reality mindset in the world of immediate experience and a mythology mindset when discussing issues in the public sphere. Although that is an accurate observation about a general tendency, delusions are also fairly common in the world of immediate experience.

The delusions that most of us experience are fairly harmless. For example, it may not do you much harm to believe that you are happier than average, even if you aren’t. That common delusion may help to explain why so many people walk around with smiles on their faces.

For some unfortunate people, however, the world of immediate experience includes delusional beliefs that are symptomatic of mental ill-health. These are referred to as clinical delusions.

The question I ask above has been prompted by my reading of Lisa Bortolotti’s recent book, Why Delusions Matter. Lisa Bortolotti is a philosopher who specializes in the philosophy of the cognitive sciences, including issues relating to mental illness. She observes that there is a strong overlap between clinical and non-clinical delusional beliefs. The non-clinical delusional beliefs that she discusses include beliefs that Pinker would associate with a mythology mindset.

A conversation context

Bortolotti notes that in any discussion between two people, you have a speaker and an interpreter swapping roles as the conversation proceeds. The speaker says something and the interpreter listens, making inferences about the speaker’s beliefs, desires, feelings, hopes and intentions on the basis of the speaker’s words, facial expression, tone of voice, previous behaviour and so on.

Interpretation becomes challenging when the interpreter suspects that the speaker may be delusional. The interpreter rarely has the information needed to assess whether the speaker’s beliefs are false, so falsity cannot be a necessary condition for attribution of delusionality.

Three elements are often involved when the interpreter judges the speaker to be delusional:

- Implausibility: The interpreter finds the speaker’s beliefs to be implausible.

- Unshakeability: Speakers do not give up their beliefs in the face of counterarguments and counterevidence.

- Identity: The beliefs seem important to the image that speakers have of themselves.

Clinical delusions

Bortolotti offers what she describes as an “agency-in-context” model to explain clinical delusions. She explains:

“The adoption and maintenance of delusional beliefs are due to many factors combining aspects of who you are and what your story is (your genes, reasoning biases, personality, lack of scientific literacy, etc.) and aspects of how epistemic practices operate in the society where you live.”

The epistemic practices she refers to include what we learn at school about knowledge acquisition, and the stigma that makes it difficult for people with delusional beliefs to participate fully in public life.

There is no doubt that persecutory delusions are harmful to the speaker and others. They undermine the ability of speakers to respond appropriately to events, and often erode their relationships with others.

However, Lisa Bortolotti suggests that it is important for interpreters to understand that most delusions offer some benefits for speakers. Delusions “let speakers see the world as they want the world to be; make speakers feel important and interesting; or give meaning to speakers’ lives, configuring exciting missions for them to accomplish”.

Interpreters also need to understand that the underlying problems of speakers don’t disappear when they obtain insight about their delusions. They may become depressed when they approach reality without the filter of their delusional beliefs.

There is not much to be gained by attempting to reason with people whose beliefs are unshakeable. Bortolotti suggests that it is probably more productive for the interpreter and speaker to share stories rather than exchanging reasons for beliefs. Exchanging stories can show how delusional beliefs emerged as reactions to situations that were difficult to manage. While sharing stories, interpreters have opportunities “to practice curiosity and empathy in finding out more” about underlying problems.

Conspiracy delusions

From an interpreter’s viewpoint, a speaker’s beliefs about the existence of conspiracies often have similar characteristics to clinical delusions. They are implausible, unshakeable, and closely tied to the speaker’s self-image.

Bortolotti emphasizes that those who hold conspiracy delusions often claim to have special knowledge of events – they claim to be experts, or to know who the real experts are. Identifying as a member of a group is often also important. Non-members often refer to members of such groups in a derogatory way, e.g. as QAnon supporters and anti-vaxxers. However, people are often attracted to conspiracy delusions promoted by like-minded people whom they trust. The act of sharing a delusional story can be a signal of commitment to a particular group.

Comments

Lisa Bortolotti’s book has improved my understanding of delusions in a couple of different ways. First, it has given me a better appreciation that delusions offer some benefits to the people who hold them, and those benefits help to explain the unshakeability of delusional beliefs.

Second, viewing delusions within the context of a conversation between a speaker and an interpreter is helpful in drawing attention to the value judgements involved in assessing whether the speaker’s beliefs are delusional.

My main criticism of the book is that the author seems to me to be biased in favour of “the official version” of events, even though she acknowledges that contrary beliefs are sometimes vindicated. The most obvious example of bias is her apparent reluctance to give credence to the possibility that Covid-19 may have originated in a lab in Wuhan.

I am pleased that my reading of the book did not leave me with the impression that the author believes that it is delusional to have an unshakeable belief in the importance of the search for truth. In emphasizing that value judgements are involved in assessing whether beliefs are delusional, Lisa Bortolotti seems to me to be providing readers with a better understanding of the meaning attached to the concept of delusion in clinical and non-clinical settings, rather than casting doubt on the existence of reality.